Here is an email a distributor might receive at 7:43 AM on a Monday:

"Hey Sandra, can you do the usual order plus 30 more of the blue 40mm fittings, and check if you have the larger size in that gate valve. Also we need 2 pallets of the 15mm copper pipe, same spec as February. Thanks, Martin"

No product codes. No quantities for the "usual order." A vague reference to "that gate valve." A request to check stock mixed in with an actual order.

A senior customer service representative (CSR) named Sandra would handle this in about 6 minutes. She knows Martin, knows his usual order, knows which gate valve he means, and knows the February copper pipe spec. A new hire would take 15 minutes and probably get the gate valve wrong.

Here is what happens when AI processes the same email. Every step below is real, not theoretical, and it happens in under 30 seconds.

Step 1: Inbox Monitoring Detects the Email as an Order

The AI monitors a designated email inbox continuously. When Martin's email arrives, the system does not wait for someone to open it, triage it, or forward it to the right person.

The first task is classification: is this an order, a general inquiry, a complaint, or something else? The AI reads the email body, identifies order-intent language ("can you do the usual order," "we need 2 pallets"), and classifies it as a purchase order.

This classification step filters out the noise. Delivery status questions, pricing inquiries, and promotional replies are routed differently. Only confirmed order-intent emails enter the processing pipeline.
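The routing decision can be pictured with a deliberately simple stand-in. The sketch below uses keyword patterns in place of the language model a real system would rely on; the pattern lists, the label names, and the `classify_email` function are illustrative assumptions, not the product's actual logic.

```python
import re

# Hypothetical phrase patterns. A production classifier would be a trained
# language model, not a keyword list; this only illustrates the routing idea.
ORDER_PATTERNS = [
    r"\busual order\b",
    r"\bwe need \d+\b",
    r"\bplease (send|ship|order)\b",
    r"\b\d+\s+(pallets?|pcs|pieces|units)\b",
]
STATUS_PATTERNS = [
    r"\bwhere is my\b",
    r"\bdelivery status\b",
    r"\btracking\b",
]


def classify_email(body: str) -> str:
    """Return a coarse label that decides which handling path an email takes."""
    text = body.lower()
    if any(re.search(pattern, text) for pattern in ORDER_PATTERNS):
        return "order"
    if any(re.search(pattern, text) for pattern in STATUS_PATTERNS):
        return "delivery_status"
    return "other"


martin = (
    "Hey Sandra, can you do the usual order plus 30 more of the blue 40mm "
    "fittings. Also we need 2 pallets of the 15mm copper pipe."
)
label = classify_email(martin)  # only "order" emails enter the pipeline
```

Only emails labeled as orders would continue to the interpretation step; everything else goes to a different queue.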

For a distributor receiving 200 emails per day, of which 80 are actual orders, this step alone eliminates the manual triage that typically consumes the first hour of an order desk's morning.

Step 2: AI Reads and Interprets the Email Content

This is where the technical difference between AI order processing and older approaches becomes concrete.

An OCR system or a template-based parser would fail on Martin's email immediately. There is no table to scan. There are no labeled columns. There is no structure at all. It is conversational English with implied context.

The AI applies natural language processing (NLP) to interpret meaning, not match patterns. It parses Martin's email into discrete order signals:

- Signal 1: "the usual order" references historical order data for this customer

- Signal 2: "plus 30 more of the blue 40mm fittings" is an addition to the baseline, with a product described by color, size, and category

- Signal 3: "check if you have the larger size in that gate valve" is a stock inquiry attached to a product referenced by prior conversation context

- Signal 4: "2 pallets of the 15mm copper pipe, same spec as February" combines a quantity, unit, product description, and historical specification reference

Each signal becomes a candidate line item. The stock inquiry (Signal 3) is separated from the order items and routed to a different handling path, since it needs a stock check response, not an ERP entry.
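One way to picture the four signals and the split between order items and the stock inquiry is as a small data structure. This is a minimal sketch; the `Signal` and `SignalKind` names and the `route` function are assumptions made for illustration, and the signal list is written by hand here rather than extracted by a model.

```python
from dataclasses import dataclass
from enum import Enum


class SignalKind(Enum):
    HISTORY_REFERENCE = "history_reference"  # "the usual order"
    ORDER_LINE = "order_line"                # an explicit product and quantity
    STOCK_INQUIRY = "stock_inquiry"          # a question, not an order


@dataclass
class Signal:
    text: str
    kind: SignalKind


# The four signals from Martin's email, hand-labeled for the example.
signals = [
    Signal("the usual order", SignalKind.HISTORY_REFERENCE),
    Signal("plus 30 more of the blue 40mm fittings", SignalKind.ORDER_LINE),
    Signal("check if you have the larger size in that gate valve",
           SignalKind.STOCK_INQUIRY),
    Signal("2 pallets of the 15mm copper pipe, same spec as February",
           SignalKind.ORDER_LINE),
]


def route(all_signals):
    """Split signals into candidate order items and stock inquiries."""
    order_items = [s for s in all_signals if s.kind is not SignalKind.STOCK_INQUIRY]
    inquiries = [s for s in all_signals if s.kind is SignalKind.STOCK_INQUIRY]
    return order_items, inquiries


order_items, inquiries = route(signals)
```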

This is where OCR fails. There is no template here. The AI is not scanning a fixed layout. It is reading language the way Sandra reads it, except it does so in 2 seconds instead of 6 minutes.

Step 3: Product Matching Against Your Catalog

Each parsed signal now needs to match to a real SKU in the distributor's catalog. This is the hardest step and the one where most automation historically breaks down.

Martin wrote "blue 40mm fittings." The catalog might list this as:

- SKU: FIT-BR-40-BLU

- Description: "Brass Fitting 40mm Blue PVC Coated"

- Alternate names in system: "40mm blue fitting," "blue coated brass 40mm"

The AI does not need an exact string match. It uses semantic matching, understanding that "blue 40mm fittings" refers to the same product as "Brass Fitting 40mm Blue PVC Coated" by analyzing the meaning of the description components: color (blue), size (40mm), and category (fitting).
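The component-based idea can be sketched with plain attribute extraction. Real semantic matching would typically use a language model or embeddings; the version below swaps in simple token rules so the mechanism is visible. The `COLORS` and `CATEGORIES` vocabularies and both function names are assumptions for the example.

```python
import re

CATALOG = {
    "FIT-BR-40-BLU": "Brass Fitting 40mm Blue PVC Coated",
    "CU-PIPE-15-B": "Copper Pipe 15mm Type B",
}

# Hypothetical attribute vocabularies, kept tiny for the example.
COLORS = {"blue", "red", "green"}
CATEGORIES = {"fitting", "pipe", "valve"}


def extract_attributes(text):
    """Pull color, size, and category out of a free-text description."""
    attrs = {}
    for token in re.findall(r"[a-z0-9]+", text.lower()):
        singular = token[:-1] if token.endswith("s") else token
        if token in COLORS:
            attrs["color"] = token
        elif re.fullmatch(r"\d+mm", token):
            attrs["size"] = token
        elif singular in CATEGORIES:
            attrs["category"] = singular
    return attrs


def best_match(description, catalog):
    """Return (sku, score), where score is the share of requested attributes matched."""
    wanted = extract_attributes(description)
    best_sku, best_score = None, 0.0
    for sku, catalog_description in catalog.items():
        have = extract_attributes(catalog_description)
        hits = sum(1 for key, value in wanted.items() if have.get(key) == value)
        score = hits / len(wanted) if wanted else 0.0
        if score > best_score:
            best_sku, best_score = sku, score
    return best_sku, best_score


match = best_match("blue 40mm fittings", CATALOG)
```

Word order and extra catalog words ("Brass", "PVC Coated") do not matter here, because the comparison is made on attributes rather than on the raw string.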

For the "usual order," the system retrieves Martin's last completed order and pulls the full line-item list. For "same spec as February," it locates the February order and matches the copper pipe specification.

This matching process handles the real-world complexity that makes distribution order processing so difficult:

- Customer nicknames: Martin calls it "the blue ones." The catalog calls it FIT-BR-40-BLU. The AI bridges the gap.

- Abbreviations: "Cu pipe 15mm" matches to "Copper Pipe 15mm Type B" because the AI understands that Cu is the chemical symbol for copper.

- Unit conversions: "2 pallets" must resolve to a quantity. If the catalog tracks individual units and a pallet holds 50 units, the AI calculates 100 units.

- Partial descriptions: When Martin writes "the larger size in that gate valve," the AI cross-references his recent order history to identify which gate valve he has previously ordered, then finds the next size up in the same product line. These are among the hardest matching challenges.
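Three of the cases in this list reduce to small lookups once the product is known. The sketch below is illustrative only: the abbreviation table, the pack-size table, and the ordering of the gate valve line are assumptions (the 50-per-pallet figure comes from the example above), and the function names are hypothetical.

```python
# Hypothetical lookup tables for the example.
ABBREVIATIONS = {"cu": "copper"}
UNITS_PER_PACK = {("CU-PIPE-15-B", "pallet"): 50}  # assumed: 50 units per pallet
BASE_UNITS = {"pc", "piece", "unit"}
GATE_VALVE_LINE = ["GV-DN40", "GV-DN50", "GV-DN65"]  # assumed ordered by size


def expand_abbreviations(text):
    """Replace known abbreviations word by word, e.g. 'Cu' -> 'copper'."""
    return " ".join(ABBREVIATIONS.get(word.lower(), word) for word in text.split())


def to_base_units(sku, qty, unit):
    """Convert an ordered quantity into the unit the catalog tracks."""
    unit = unit.lower().rstrip("s")  # 'pallets' -> 'pallet', 'pcs' -> 'pc'
    if unit in BASE_UNITS:
        return qty
    return qty * UNITS_PER_PACK[(sku, unit)]


def next_size_up(sku, product_line):
    """Return the next larger SKU in the same product line, or None at the top."""
    position = product_line.index(sku)
    if position + 1 < len(product_line):
        return product_line[position + 1]
    return None


expanded = expand_abbreviations("Cu pipe 15mm")
pallet_qty = to_base_units("CU-PIPE-15-B", 2, "pallets")
larger_valve = next_size_up("GV-DN40", GATE_VALVE_LINE)
```

The hard part in practice is deciding which product "that gate valve" refers to; once that is settled, stepping to the next size is the easy step shown here.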

How This Differs From Template Matching

A template system would need a pre-built mapping for Martin's specific vocabulary. When Martin changes how he describes products (which customers do regularly), the template breaks. The AI does not use per-customer templates. It interprets meaning from the language itself, combined with catalog data and order history. A new customer sending an order in a format the system has never seen before is processed the same way as a customer with two years of history.

Step 4: Confidence Scoring for Every Line Item

Not all matches are equally certain. The AI assigns a confidence score to each line item, a number between 0 and 1 that represents how sure it is about the interpretation and product match.

Here is what Martin's order looks like after scoring:

| Line | Interpreted Item | Matched SKU | Qty | Confidence |
|---|---|---|---|---|
| 1 | Usual order (12 line items from last order) | [12 SKUs from order history] | [historical quantities] | 0.97 |
| 2 | Blue 40mm fitting | FIT-BR-40-BLU | 30 | 0.94 |
| 3 | 15mm copper pipe, Feb spec | CU-PIPE-15-B | 100 (2 pallets) | 0.91 |
| 4 | Gate valve, larger size | GV-DN50 (?) | 1 (?) | 0.45 |

Lines 1 through 3 score above the confidence threshold (typically set at 0.85). They can proceed to the ERP automatically.

Line 4 scores 0.45. The AI found a gate valve in Martin's order history (GV-DN40) and identified the next size up in the product line (GV-DN50), but the phrase "that gate valve" combined with a vague size request ("larger") introduces genuine ambiguity. Multiple gate valve lines exist, and the quantity is assumed to be 1 because Martin did not specify.

A 0.45 score means: the AI has a reasonable guess, but this needs human confirmation before it enters the ERP.
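The threshold rule itself is simple enough to show directly. This is a minimal sketch using the scores from the table above and the 0.85 threshold mentioned in the text; the `route_by_confidence` name and the tuple layout are assumptions for illustration.

```python
CONFIDENCE_THRESHOLD = 0.85  # the typical setting mentioned above

# (interpreted item, confidence) pairs from Martin's scored order.
scored_lines = [
    ("Usual order", 0.97),
    ("Blue 40mm fitting", 0.94),
    ("15mm copper pipe, Feb spec", 0.91),
    ("Gate valve, larger size", 0.45),
]


def route_by_confidence(lines, threshold=CONFIDENCE_THRESHOLD):
    """Split scored lines into those that proceed automatically and those needing review."""
    automatic = [item for item, score in lines if score >= threshold]
    needs_review = [item for item, score in lines if score < threshold]
    return automatic, needs_review


automatic, needs_review = route_by_confidence(scored_lines)
```

Raising the threshold sends more lines to review; lowering it lets more through untouched. Where to set it is a business decision about acceptable error rates.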

Why Confidence Scoring Matters

Without confidence scoring, you have a black box. The AI would either guess silently (and sometimes guess wrong, creating errors downstream in fulfillment) or reject everything that is not a perfect match (and send most orders back to manual processing, defeating the purpose).

Confidence scoring creates a third path: informed escalation. The AI handles the work it is confident about, and escalates exactly the items where human judgment genuinely adds value. This is the difference between automation that replaces the team and automation that removes low-value work from the team's plate.

Step 5: Human Review for Low-Confidence Items

Line 4 from Martin's order (the gate valve) lands in a review dashboard. A team member sees:

- The original email text: "check if you have the larger size in that gate valve"

- The AI's best guess: GV-DN50, quantity 1

- The AI's reasoning: Martin ordered GV-DN40 in his last three orders. GV-DN50 is the next size up in the same product line.

- Confidence: 0.45

- Alternative matches: GV-DN65 (next size after DN50), GV-BRASS-50 (different material, same size)

The reviewer sees all the context the AI used to make its guess. They can confirm GV-DN50, select an alternative, adjust the quantity, or flag it for a callback to Martin.
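The review record and the reviewer's four options can be modeled roughly as follows. The field names, action names, and the `resolve` function are hypothetical; they mirror the dashboard contents listed above rather than any documented interface.

```python
from dataclasses import dataclass, field


@dataclass
class ReviewItem:
    source_text: str
    guess_sku: str
    guess_qty: int
    confidence: float
    reasoning: str
    alternatives: list = field(default_factory=list)


def resolve(item, action, sku=None, qty=None):
    """Apply a reviewer decision; returns a confirmed line, or None for a callback."""
    if action == "confirm":
        return {"sku": item.guess_sku, "qty": item.guess_qty}
    if action == "select_alternative":
        if sku not in item.alternatives:
            raise ValueError(f"{sku} is not one of the offered alternatives")
        return {"sku": sku, "qty": item.guess_qty}
    if action == "adjust_quantity":
        if qty is None or qty <= 0:
            raise ValueError("qty must be a positive number")
        return {"sku": item.guess_sku, "qty": qty}
    if action == "callback":
        return None  # held until someone has spoken to the customer
    raise ValueError(f"unknown action: {action}")


gate_valve = ReviewItem(
    source_text="check if you have the larger size in that gate valve",
    guess_sku="GV-DN50",
    guess_qty=1,
    confidence=0.45,
    reasoning="GV-DN40 in the last three orders; GV-DN50 is the next size up.",
    alternatives=["GV-DN65", "GV-BRASS-50"],
)
confirmed = resolve(gate_valve, "confirm")
```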

This step typically takes 15-30 seconds per flagged item. Compare that to the 6 minutes Sandra would spend processing the entire order manually. The AI did 90% of the work. The human does the 10% where human judgment is actually needed.

For a team processing 200 orders per day, this changes the math entirely. If 50% of orders need zero human review and the other 50% need review on an average of 1-2 line items each, the total human effort drops from 200 orders at 5 minutes each (16.7 hours) to roughly 100 orders at 30 seconds each (less than 1 hour).
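The arithmetic behind those figures can be reproduced in a few lines. The inputs are the article's own numbers; the variable names are only for illustration.

```python
ORDERS_PER_DAY = 200
MANUAL_MINUTES_PER_ORDER = 5
SHARE_NEEDING_REVIEW = 0.5
REVIEW_SECONDS_PER_ORDER = 30

# Fully manual: every order is keyed by hand.
manual_hours = ORDERS_PER_DAY * MANUAL_MINUTES_PER_ORDER / 60

# With confidence-based review: only flagged orders need a person.
reviewed_orders = ORDERS_PER_DAY * SHARE_NEEDING_REVIEW
review_minutes = reviewed_orders * REVIEW_SECONDS_PER_ORDER / 60

print(f"Manual: {manual_hours:.1f} hours per day")
print(f"Review: {review_minutes:.0f} minutes per day")
```

The result scales linearly with the time spent per flagged order, so adjusting `REVIEW_SECONDS_PER_ORDER` shows how sensitive the estimate is to that assumption.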

Step 6: ERP-Ready Structured Output

Once all line items are confirmed, either automatically by the AI or manually by a reviewer, the order is pushed to the ERP as structured data.

The output for Martin's order looks like this:

| Field | Value |
|---|---|
| Customer ID | CUST-4471 (Martin Bauer, Bauer Haustechnik GmbH) |
| Order Reference | Email 2026-03-15-07:43 |
| PO Number | [none: free-text email, no PO reference] |

Line items:

| SKU | Description | Qty | Unit | Unit Price |
|---|---|---|---|---|
| FIT-BR-40-BLU | Brass Fitting 40mm Blue PVC Coated | 30 | pcs | EUR 4.20 |
| CU-PIPE-15-B | Copper Pipe 15mm Type B | 100 | pcs | EUR 12.50 |
| GV-DN50 | Gate Valve DN50 PN16 | 1 | pcs | EUR 87.00 |
| [+ 12 items from usual order] | | | | |

This data enters SAP, Microsoft Dynamics, Sage, or whichever ERP the distributor uses, through a standard API connection. No CSV uploads. No copy-paste. No re-keying.
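As a rough picture of what "structured data" means here, the order can be expressed as a JSON document and checked before it is sent. The field names and the `validate` function below are assumptions; each ERP defines its own schema and API, which this sketch does not attempt to reproduce. The 12 usual-order items are left out because the article does not list them.

```python
import json

order = {
    "customer_id": "CUST-4471",
    "order_reference": "Email 2026-03-15-07:43",
    "po_number": None,  # free-text email, no PO reference
    "lines": [
        {"sku": "FIT-BR-40-BLU", "qty": 30, "unit": "pcs",
         "unit_price": "4.20", "currency": "EUR"},
        {"sku": "CU-PIPE-15-B", "qty": 100, "unit": "pcs",
         "unit_price": "12.50", "currency": "EUR"},
        {"sku": "GV-DN50", "qty": 1, "unit": "pcs",
         "unit_price": "87.00", "currency": "EUR"},
    ],
}


def validate(candidate):
    """Return a list of problems; an empty list means the order can be pushed."""
    problems = []
    if not candidate.get("customer_id"):
        problems.append("missing customer_id")
    for number, line in enumerate(candidate.get("lines", []), start=1):
        if not line.get("sku"):
            problems.append(f"line {number}: missing sku")
        if not isinstance(line.get("qty"), int) or line["qty"] <= 0:
            problems.append(f"line {number}: qty must be a positive integer")
    return problems


problems = validate(order)
payload = json.dumps(order, indent=2)  # the body an API request would carry
```

Prices are kept as strings so that no floating-point rounding creeps in before the ERP parses them.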

The order confirmation email to Martin can be generated automatically from this structured data, sent for approval, or held for Sandra to personalize, depending on the workflow rules the distributor has configured.

What Happens With Non-Standard Inputs

Martin's email is a common format, but it is far from the only one. Here is how AI handles the edge cases that break traditional automation.

Multi-Language Orders

A customer in Strasbourg sends an order mixing French and German:

"Bonjour, ich brauche 50 Stuck von den Kupferrohren 22mm und aussi 10 raccords en laiton 3/4 pouce. Merci, Pierre"

The AI processes both languages in a single pass. "Kupferrohren 22mm" (German: copper pipes 22mm) and "raccords en laiton 3/4 pouce" (French: brass fittings 3/4 inch) are each matched to the correct catalog entries regardless of language. No language-specific templates needed.

"Same as Last Time" References

"Hi, repeat last Tuesday's order but skip the gaskets."

The AI retrieves the order from last Tuesday, reproduces all line items, and removes any items categorized as gaskets. The confidence score on the "skip the gaskets" instruction is typically high (0.90+) because the removal is explicit. The reproduced baseline items inherit the confidence of the historical match.
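Once the historical order is found, the "skip" instruction is a filter over its line items. The order contents, SKUs, and category labels below are invented for the example, as is the `repeat_order_excluding` name.

```python
# Hypothetical contents of last Tuesday's order.
last_tuesday_order = [
    {"sku": "FIT-BR-40-BLU", "category": "fitting", "qty": 30},
    {"sku": "GSK-40-RUB", "category": "gasket", "qty": 100},
    {"sku": "CU-PIPE-15-B", "category": "pipe", "qty": 50},
]


def repeat_order_excluding(previous_order, excluded_category):
    """Copy every line of a previous order except those in the excluded category."""
    return [
        dict(line)  # copy, so the historical record is never modified
        for line in previous_order
        if line["category"] != excluded_category
    ]


new_order = repeat_order_excluding(last_tuesday_order, "gasket")
```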

Handwritten Notes as Email Attachments

A warehouse manager photographs a handwritten list and emails the image. The AI applies OCR to extract text from the image, then feeds the extracted text through the same NLP interpretation pipeline described above.

Handwritten inputs typically produce lower confidence scores: a "3" that might be an "8," a product name with unclear characters. The system flags more items for human review on handwritten inputs, but the fundamental process is identical. The AI does the reading; the human confirms the edge cases.

PDF Purchase Orders From Structured Senders

Not every order is messy. When a customer sends a well-formatted PDF purchase order with clear SKU codes and quantities, the AI still processes it through the same pipeline, but nearly every line item scores 0.95+ confidence, and the entire order flows through to the ERP with zero human touch.

This is the advantage of a single pipeline that handles everything from handwritten notes to structured POs: the team does not need to sort orders into "easy" and "hard" piles. The AI handles the sorting by confidence score.

Real-World Validation

This process is not theoretical. At Meesenburg Romania, a building materials distributor processing orders from hundreds of customers, approximately 98% of AI-processed orders needed no modification after processing. 50% of orders were fully automated end-to-end with no human review at all. Read the full Meesenburg case study for deployment details and results.

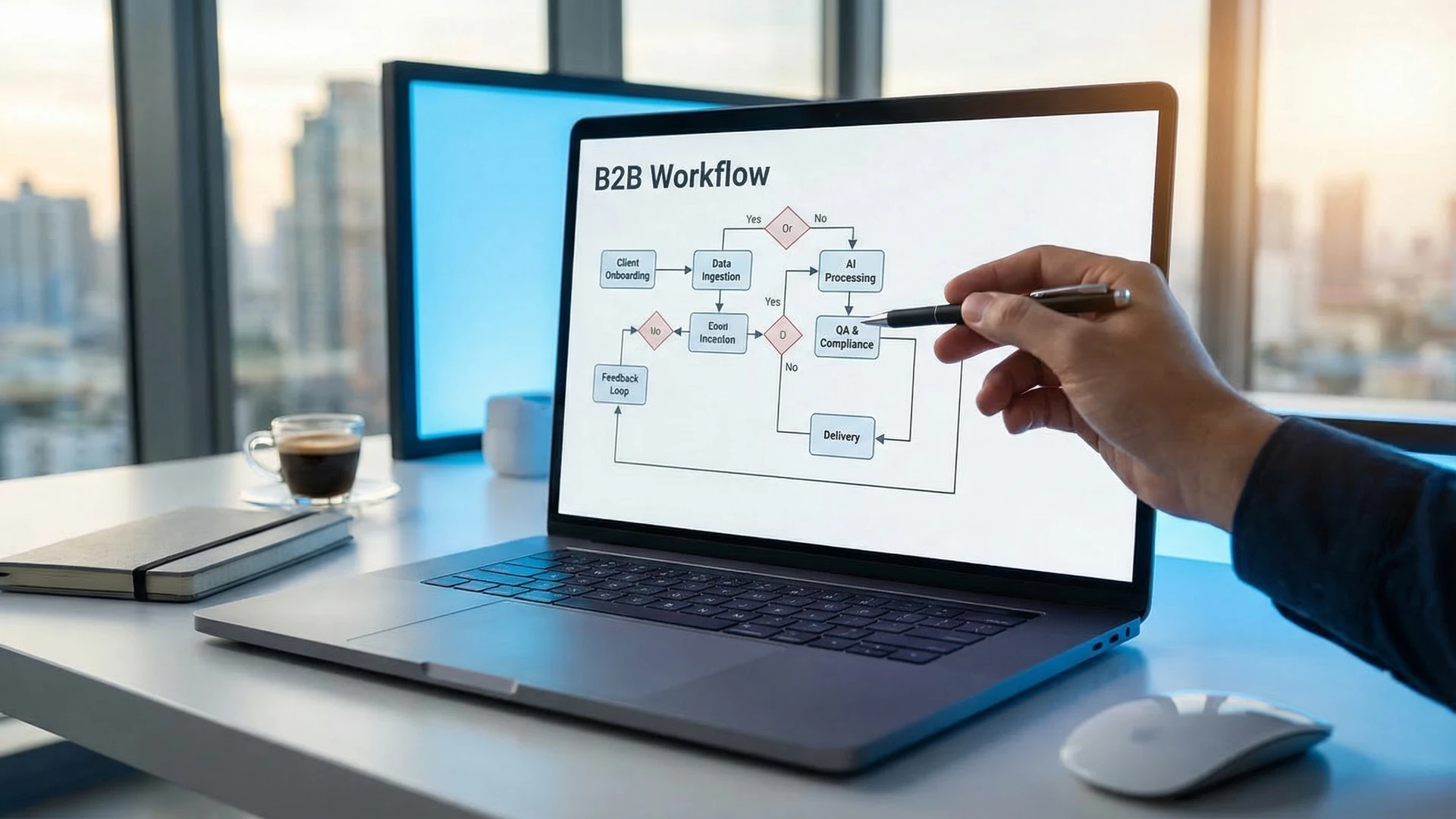

The Processing Pipeline at a Glance

For a quick reference, here is the complete pipeline:

- Inbox monitoring. AI watches the email inbox continuously, classifies incoming messages, and routes orders into the processing pipeline.

- Content interpretation. NLP reads and understands the email content, extracting order signals from any format or language.

- Product matching. Semantic matching connects customer descriptions, abbreviations, and nicknames to specific SKUs in your catalog.

- Confidence scoring. Each line item receives a confidence score. High-confidence items proceed automatically. Low-confidence items are flagged.

- Human review. Flagged items appear in a dashboard with the AI's reasoning and alternative matches. A reviewer confirms or corrects in seconds.

- ERP-ready output. Confirmed orders push to your ERP via API as structured data. No re-keying, no CSV uploads, no manual entry.

The technical depth of each step varies by order complexity, but the pipeline is the same whether the input is a structured PDF or a photo of a handwritten list. That consistency is what separates AI order processing from the template-based tools that require a different configuration for every customer and every format.

What This Means for Your Order Desk

The shift is not about replacing the order processing team. It is about changing what the team spends time on.

Before AI processing, a CSR like Sandra spends 80% of her day on mechanical work: reading emails, finding SKUs, typing data into the ERP. The remaining 20% is the work that actually requires Sandra's experience: handling exceptions, managing customer relationships, spotting upsell opportunities.

After AI processing, that ratio inverts. The AI handles the mechanical 80%. Sandra's day is spent on the exceptions that genuinely need her judgment, on customer calls that build relationships, and on the work that makes her experience valuable rather than replaceable.

For distribution businesses exploring sales order automation, the technical pipeline described above is what runs underneath the product interface. The question is whether that pipeline can handle the specific formats, languages, and edge cases your customers send.

The fastest way to find out is to test it on your actual orders, not a demo dataset. Send the messiest emails your team received this week and see the output: line items matched to your catalog, confidence scores assigned, exceptions flagged. If it handles your hardest cases, everything else follows.

Learn how OrderFlow handles email order processing for distribution businesses.